WHERE event_time BETWEEN now() - interval '51 weeks' AND now() GROUP BY date ORDER BY date Index Cond: ((account_history.event_time >= (now() - '357 days'::interval)) AND (account_history.event_time EXPLAIN ANALYZE VERBOSE SELECT date_trunc('week', event_time) AS date, count(DISTINCT account) FROM account_history Output: account, event_code, event_time, descriptionĪnd using the same time range clause takes less than one second: account=> EXPLAIN ANALYZE VERBOSE SELECT COUNT(*) FROM account_history WHERE event_time BETWEEN now() - interval '51 weeks' AND now() Īggregate (cost=9357.58 rows=1 width=0) (actual time=997.436.997.436 rows=1 loops=1) Index Cond: ((account_history.event_time >= (now() - '357 days'::interval)) AND (account_history.event_time EXPLAIN ANALYZE VERBOSE SELECT COUNT(*) FROM account_history Īggregate (cost=9741.05 rows=1 width=0) (actual time=551.179.551.179 rows=1 loops=1) Output: (to_timestamp(to_char(date_trunc('week'::text, event_time), 'IYYY-IW'::text), 'IYYY-IW'::text))::date, account Sort Method: external merge Disk: 40688kB

Sort Key: ((to_timestamp(to_char(date_trunc('week'::text, account_history.event_time), 'IYYY-IW'::text), 'IYYY-IW'::text))::date) Output: ((to_timestamp(to_char(date_trunc('week'::text, event_time), 'IYYY-IW'::text), 'IYYY-IW'::text))::date), account Group Key: ((to_timestamp(to_char(date_trunc('week'::text, account_history.event_time), 'IYYY-IW'::text), 'IYYY-IW'::text))::date) Output: ((to_timestamp(to_char(date_trunc('week'::text, event_time), 'IYYY-IW'::text), 'IYYY-IW'::text))::date), count(DISTINCT account) However, it only reduced the execution time by 25%: account=> CREATE INDEX account_history_time_idx ON account_history (event_time DESC) Īccount=> EXPLAIN ANALYZE VERBOSE SELECT to_timestamp(to_char(date_trunc('week', event_time), 'IYYY-IW'), 'IYYY-IW')::date AS date, count(DISTINCT account) FROM account_history WHERE event_time BETWEEN now() - interval '51 weeks' AND now() GROUP BY date ORDER BY date Update: When I add an index over only the timestamp, that index is used. Is there a better way to index this table, or is there something inherent in my query that is bypassing both of these indices? I tried adding an ordered index as recommended here, but that is clearly not being used in the execution plan either.

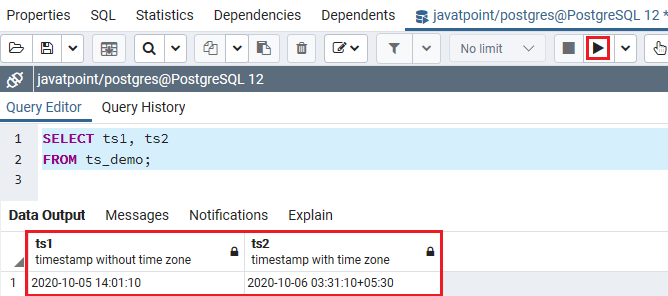

However, now the table has grown into the millions, I've noticed a performance issue with the query, and discovered that the index is not being used in the query. When I originally created this table, I added the timestamp column as part of a btree index, but I figured that the sequential scan was due to the (then) small number of rows in the table (see related question). "event_code_fk" FOREIGN KEY (event_code) REFERENCES domain_account_event(code) ON UPDATE CASCADE ON DELETE RESTRICT "account_fk" FOREIGN KEY (account) REFERENCES account(id) ON UPDATE CASCADE ON DELETE RESTRICT "account_id_idx" btree (account, event_code, event_time) "account_history_idx" btree (account, event_time DESC) +-+-Įvent_time | timestamp without time zone | not null default now()ĭescription | text | not null default ''::text

Sort Key: ((to_timestamp(to_char(date_trunc('week'::text, event_time), 'IYYY-IW'::text), 'IYYY-IW'::text))::date) Group Key: ((to_timestamp(to_char(date_trunc('week'::text, event_time), 'IYYY-IW'::text), 'IYYY-IW'::text))::date) account=> EXPLAIN ANALYZE SELECT to_timestamp(to_char(date_trunc('week', event_time), 'IYYY-IW'), 'IYYY-IW')::date AS date, count(DISTINCT account) FROM account_history WHERE event_time BETWEEN now() - interval '51 weeks' AND now() GROUP BY date ORDER BY date The (current) bottleneck is the sequential scan over the timestamp range. The table currently has nearly 5 million rows and this query currently takes 8 seconds to execute. I have a slow query that generates a report of account activity per week over the past year.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed